Cloud computing revolutionized AI development by making GPU resources accessible to anyone with a credit card, but in 2026 the economics have shifted dramatically. Organizations training models regularly now face monthly cloud bills exceeding the purchase price of professional workstations, while individuals experimenting with AI find per-hour charges constraining their learning and creativity. The total cost of ownership calculation between cloud rental and workstation ownership reveals a surprising reality: for continuous development workflows, local hardware can pay for itself within one to three years, depending on utilization, while providing superior performance and flexibility. ABS AI Workstations deliver professional-grade AI compute with a one-time investment that eliminates recurring charges and enables unlimited experimentation.

The Cloud Cost Reality: Running the Numbers

A typical AI development workflow in 2026 involves eight hours daily of active GPU usage: training experiments during work hours, running inference benchmarks, preprocessing datasets, and fine-tuning models. On AWS, a p4d.24xlarge instance with eight A100 GPUs costs $32.77 per hour. For comparison, a single-GPU p3.2xlarge with one V100 GPU costs $3.06 per hour—still substantial when accumulated across months of development.

Consider a machine learning team running a single-GPU instance eight hours per day, five days per week. At roughly 160 billable hours per month, the monthly cost reaches about $489. Annual cost: $5,868. Over two years, this team spends $11,736 renting GPU access comparable to hardware it could own outright. The ABS Zaurion Aqua with RTX Pro 6000 and 96GB VRAM provides superior capabilities to cloud V100 instances at approximately $13,000, paying for itself within 27 months of equivalent cloud usage.
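The arithmetic above can be reproduced in a few lines. This is a minimal sketch: the function names are hypothetical, and the four-week billing month is an assumption chosen because it reproduces the $489 figure (a 52-week year would give a somewhat higher total).

```python
import math

def monthly_cloud_cost(hourly_rate, hours_per_day, days_per_week, weeks_per_month=4):
    """On-demand cost for one month, assuming a fixed weekly schedule."""
    return hourly_rate * hours_per_day * days_per_week * weeks_per_month

def breakeven_months(hardware_price, monthly_cost):
    """Whole months of cloud spending needed to match the hardware price."""
    return math.ceil(hardware_price / monthly_cost)

monthly = monthly_cloud_cost(3.06, 8, 5)     # single-GPU hourly rate from the text
annual = monthly * 12
months = breakeven_months(13_000, monthly)   # approximate workstation price from the text

print(f"monthly ${monthly:,.2f}, annual ${annual:,.2f}, breakeven {months} months")
```

With these inputs the monthly figure comes to $489.60 and the breakeven to 27 months. The annual figure lands slightly above $5,868 because the text truncates the monthly cost to $489 before multiplying by twelve.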

The calculation becomes more dramatic for teams requiring multiple GPUs or higher utilization. A dual-GPU cloud instance running twelve hours daily costs approximately $15,000 annually. At that rate, the ABS Zaurion Duo Aqua with two RTX Pro 6000 GPUs recovers a purchase price in the $18,000–$25,000 professional-tier range within roughly 14 to 20 months, while eliminating data transfer costs, session management overhead, and the cognitive burden of monitoring hourly charges.

Cloud platforms also impose hidden costs: data egress fees when downloading trained models, storage charges for datasets and checkpoints, and premium support tiers for production workloads. An organization managing 500GB of training data and 200GB of model checkpoints pays approximately $16 monthly for storage alone at a standard object-storage rate of $0.023 per GB. That is small individually, but meaningful when accumulated across teams and projects over years.

Industry Applications: Who Benefits Most from Local Workstations

Certain industries and workflows derive exceptional value from owning AI workstation hardware rather than renting cloud resources. The common thread: continuous, iterative development where local access and data sovereignty matter more than elastic scaling.

Visual Effects and Film Production

VFX studios creating neural radiance field (NeRF) renderings for film and television process terabytes of camera footage locally. A typical feature film VFX sequence requires training multiple NeRF models on proprietary footage that cannot leave the studio network for security and copyright reasons. Cloud workflows require uploading hundreds of gigabytes per project, which is time-consuming, and downloading the rendered results afterward, which incurs data egress fees.

Studios working on multiple concurrent projects provision ABS AI Workstations for their VFX artists, enabling real-time previews of AI-assisted compositing and denoising. The ABS Zaurion Ruby with 128GB system RAM and dual RTX Pro GPUs handles real-time inference for style transfer and super-resolution upscaling, eliminating the render farm queues that delay creative iteration. One mid-size VFX studio calculated that three workstations replaced cloud spending of $72,000 annually while improving artist productivity through faster feedback loops.

Medical Imaging and Diagnostic AI

Healthcare institutions developing diagnostic AI models work with sensitive patient data subject to HIPAA compliance requirements. Cloud processing introduces data sovereignty concerns and compliance complexity that local workstations eliminate entirely. Radiology departments training models for tumor detection, organ segmentation, and anomaly identification process millions of DICOM images that never need to leave hospital networks.

A research hospital deploying AI for automated CT scan analysis provisioned workstations for their data science team rather than cloud infrastructure. The professional GPUs provide consistent inference latency for real-time clinical decision support, while local processing ensures patient data remains within hospital systems. The hospital’s IT department noted that workstation ownership simplified HIPAA audits compared to cloud vendor compliance documentation.

Financial Modeling and Algorithmic Trading

Quantitative trading firms training reinforcement learning models for market prediction require high-frequency model updates and backtesting across decades of historical data. Latency matters: uploading market data to cloud instances introduces delays that compound across thousands of training iterations. Local workstations with high-speed NVMe storage stream historical price data to GPUs at speeds cloud network connections cannot match.

One quantitative hedge fund calculated that local workstations reduced their model training iteration time by 40% compared to cloud workflows, enabling more frequent strategy refinements and faster response to market regime changes. The firm’s infrastructure team emphasized that owning hardware eliminated cloud vendor outages as failure points during critical trading periods. When milliseconds matter, local compute provides reliability advantages beyond cost savings.

Game Development and Procedural Content Generation

Game studios integrating AI for procedural level generation, NPC behavior modeling, and asset creation iterate constantly during development cycles spanning years. A AAA game studio might train hundreds of experimental models during pre-production, testing different approaches for procedural dungeon generation or dynamic difficulty adjustment. Cloud costs for this exploration phase would exceed $200,000 across a two-year development cycle.

Studios equip their AI research teams with workstations enabling unlimited experimentation without budget constraints. The ABS Zaurion Duo Ruby supports parallel training of multiple model variants, allowing game designers to compare different AI approaches interactively rather than queuing cloud jobs. One indie studio noted that workstation ownership transformed their AI experimentation from a budgeted line item requiring approval into a creative tool available whenever inspiration struck.

Configuration Tiers: Matching Investment to Workload

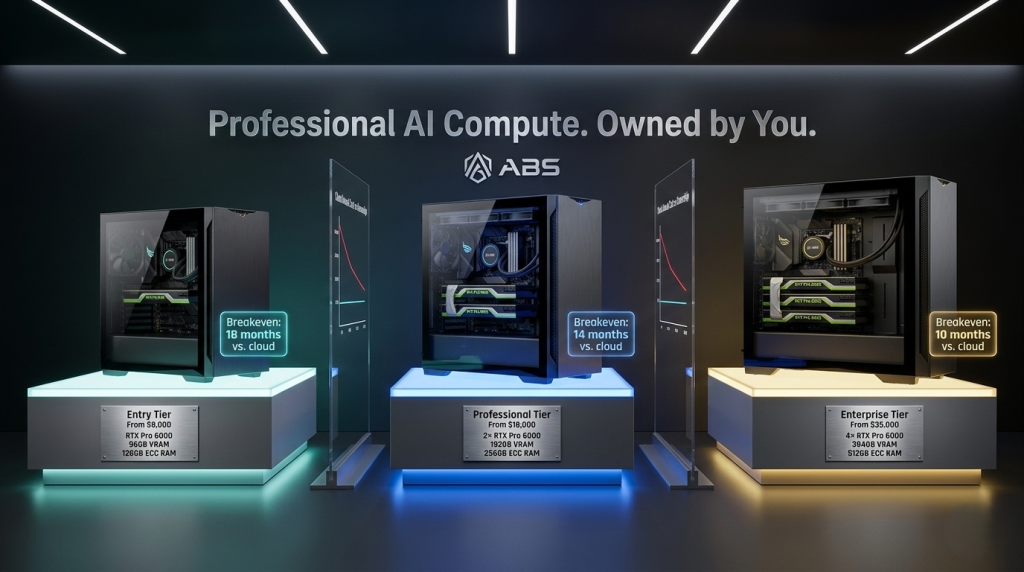

Not every AI workflow requires flagship hardware. ABS offers configurations spanning entry-level to enterprise, allowing organizations to match investment to actual computational requirements rather than over-provisioning for peak loads.

Entry Tier: Individual Developers and Learning

For individuals learning AI development, experimenting with smaller models, or building portfolio projects, a single-GPU configuration with 48GB VRAM handles most workflows. Fine-tuning pre-trained models under 10 billion parameters, training computer vision models on standard datasets like ImageNet, and running inference for production applications all work reliably within these specifications.

Entry configurations pair professional GPUs with sufficient system memory and storage for typical development datasets. A system with 128GB RAM and 2TB NVMe storage supports multiple concurrent projects and maintains complete development environments locally. This tier costs approximately $8,000–$10,000 and replaces cloud spending that would accumulate to equivalent amounts within 18–24 months of regular development work.

Professional Tier: ML Engineering Teams

Machine learning teams training larger models, running A/B tests across multiple model architectures, or supporting several concurrent projects benefit from dual-GPU configurations. The ABS Zaurion Duo configurations provide near-linear scaling for data-parallel training, coming close to halving training time for most workloads.

Professional tier systems include 256GB ECC memory for data integrity during multi-day training runs and redundant storage protecting against checkpoint loss. Teams provisioning one workstation per two engineers achieve high GPU utilization while providing backup capacity during peak development periods. This tier ranges from $18,000–$25,000 per system and typically recovers cost within the first year for teams previously using cloud infrastructure regularly.

Enterprise Tier: Research Labs and Production Training

Organizations at the frontier of AI research, training state-of-the-art models, or running continuous production training pipelines require maximum compute density. Quad-GPU configurations with NVLink enable model parallelism strategies that distribute individual models across multiple GPUs, supporting parameter counts in the hundreds of billions when combined with efficient architecture designs.

The ABS Zaurion Pro provides enterprise capabilities with professional support, extended warranty options, and validated configurations for specific frameworks and model architectures. Research institutions note that quad-GPU workstations deliver approximately 70% of the training throughput of eight-GPU cloud instances at 25% of the annual operating cost, with superior reliability for long-running experiments. Enterprise systems range from $35,000–$50,000 and serve as shared resources for research teams, typically supporting 4–6 researchers working on complementary projects.

Total Cost of Ownership Beyond Hardware Price

Comparing workstation purchase price to cloud rental costs captures the primary economic calculation, but total cost of ownership includes additional factors that favor local hardware more strongly than initial analysis suggests.

Productivity and Iteration Speed

Cloud development workflows introduce friction at multiple points: uploading datasets before training, downloading checkpoints for inference testing, managing instance lifecycles, and monitoring quota limits. These small delays accumulate across hundreds of development iterations. Engineers working locally save 15–30 minutes per day by eliminating upload waits and session management, translating to approximately 60–120 hours annually per developer.

Valuing this time at typical ML engineer compensation ($150K–$200K annually corresponds to roughly $75–$100 hourly cost), the recovered time is worth $4,500–$12,000 annually per engineer. For a three-person team, that amounts to $13,500–$36,000 annually, often sufficient to amortize workstation hardware costs independently of direct cloud cost savings.
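The productivity estimate can be checked with a short calculation. This sketch assumes 240 working days per year, a figure not stated in the text but implied by 15 minutes per day adding up to 60 hours annually. The function names are hypothetical.

```python
WORKING_DAYS_PER_YEAR = 240  # assumption: implied by 15 min/day -> 60 h/year

def annual_hours_saved(minutes_per_day, working_days=WORKING_DAYS_PER_YEAR):
    """Hours recovered per engineer per year."""
    return minutes_per_day * working_days / 60

def annual_value(minutes_per_day, hourly_cost, engineers=1):
    """Dollar value of the recovered time for a team of the given size."""
    return annual_hours_saved(minutes_per_day) * hourly_cost * engineers

per_engineer_low = annual_value(15, 75)     # 15 min/day at $75/hour
per_engineer_high = annual_value(30, 100)   # 30 min/day at $100/hour
team_low = annual_value(15, 75, engineers=3)
team_high = annual_value(30, 100, engineers=3)

print(f"per engineer: ${per_engineer_low:,.0f} to ${per_engineer_high:,.0f}")
print(f"team of three: ${team_low:,.0f} to ${team_high:,.0f}")
```

The low and high ends pair the smallest time saving with the lowest hourly cost and the largest with the highest, so the range brackets the plausible outcomes rather than predicting a typical one.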

Data Transfer and Storage Costs

Cloud platforms charge for data egress when downloading trained models, with rates typically $0.08–$0.12 per GB after free tier allowances. Organizations downloading 1TB monthly of checkpoints and inference data pay roughly $1,000 to $1,400 annually in transfer fees alone. Local workstations eliminate these charges entirely while providing faster access to results through local network speeds exceeding cloud download bandwidth.

Long-term storage costs also accumulate. Cloud object storage charges $0.023 per GB monthly for standard tiers. An organization maintaining 10TB of historical datasets and model versions pays approximately $2,760 annually for storage. A workstation equipped with enterprise NAS storage provides equivalent capacity with one-time hardware cost and no recurring charges.
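The transfer and storage figures follow from simple per-GB arithmetic. The sketch below assumes 1TB equals 1,000GB and ignores free-tier allowances. The function names are hypothetical, and the rates are the ones quoted in the text.

```python
def annual_egress_cost(gb_per_month, rate_per_gb):
    """Yearly data-transfer charge for a steady monthly download volume."""
    return gb_per_month * rate_per_gb * 12

def annual_storage_cost(stored_gb, rate_per_gb_month=0.023):
    """Yearly object-storage charge for a constant amount of stored data."""
    return stored_gb * rate_per_gb_month * 12

egress_low = annual_egress_cost(1_000, 0.08)    # 1TB/month at the low rate
egress_high = annual_egress_cost(1_000, 0.12)   # 1TB/month at the high rate
storage = annual_storage_cost(10_000)           # 10TB held all year

print(f"egress: ${egress_low:,.0f} to ${egress_high:,.0f} per year")
print(f"storage: ${storage:,.0f} per year")
```

Both functions assume constant volumes. In practice stored data tends to grow over a project's life, so the storage line is better read as a floor than as a forecast.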

Depreciation and Resale Value

Workstation hardware retains value as computational equipment even as newer generations arrive. A three-year-old professional GPU continues to serve fine-tuning, inference, and development workloads effectively, allowing organizations to repurpose hardware into secondary roles rather than treating costs as sunk. Depreciation for tax purposes provides additional financial benefits that cloud operational expenses lack.

Organizations upgrading to newer hardware generations can resell previous workstations to recoup 30%–50% of original purchase price, effectively reducing net cost further. A workstation purchased for $15,000 and sold three years later for $6,000 represents a net investment of $9,000—equivalent to just 18 months of moderate cloud usage at $500 monthly.
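The resale example reduces to two small calculations, sketched here with hypothetical function names and the figures from the paragraph above.

```python
def net_hardware_cost(purchase_price, resale_price):
    """Out-of-pocket hardware cost after selling the used system."""
    return purchase_price - resale_price

def equivalent_cloud_months(net_cost, monthly_cloud_spend):
    """Months of cloud spending that the net hardware cost would cover."""
    return net_cost / monthly_cloud_spend

purchase, resale = 15_000, 6_000
net = net_hardware_cost(purchase, resale)
months = equivalent_cloud_months(net, 500)
resale_fraction = resale / purchase  # 0.4, inside the 30%-50% range cited

print(f"net cost ${net:,}, equal to {months:.0f} months of cloud spend")
```

The result is sensitive to the resale assumption: at the 30% end of the cited range the same system would net out at $10,500, or 21 months of equivalent cloud usage.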

Build vs. Buy: Why Pre-Built Systems Win on TCO

Technically sophisticated organizations sometimes consider building custom workstations from individual components rather than purchasing pre-configured systems. The component cost savings appear attractive initially, but total cost of ownership calculations favor pre-built systems when accounting for validation time, support overhead, and opportunity costs.

Integration Time and Troubleshooting

Building a custom AI workstation requires researching component compatibility, validating BIOS settings for multi-GPU configurations, debugging driver conflicts, and testing framework installation across the specific hardware combination. Organizations building their first AI workstation typically invest 40–80 hours in the process—time that could be spent developing models. At engineer rates of $75–$100 hourly, this represents $3,000–$8,000 in labor costs that pre-configured systems eliminate.
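One way to frame the build decision is to compare the savings on components against the labor spent integrating them. The sketch below uses the hour and rate ranges from the paragraph above. The function names and the $2,500 component saving are hypothetical, chosen only to illustrate the comparison.

```python
def integration_labor_cost(hours, hourly_rate):
    """Engineer time spent on assembly, validation, and debugging."""
    return hours * hourly_rate

def building_is_cheaper(component_savings, hours, hourly_rate):
    """True only if parts savings outweigh the labor spent integrating them."""
    return component_savings > integration_labor_cost(hours, hourly_rate)

labor_low = integration_labor_cost(40, 75)     # best case from the text
labor_high = integration_labor_cost(80, 100)   # worst case from the text

# Hypothetical scenario: $2,500 saved on parts, best-case labor.
worth_building = building_is_cheaper(2_500, 40, 75)

print(f"labor: ${labor_low:,} to ${labor_high:,}; build cheaper: {worth_building}")
```

Even in the best-case labor scenario, the hypothetical $2,500 saving does not cover the $3,000 of engineer time, so the comparison comes out in favor of the pre-built system. A larger saving on parts would reverse that result.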

Ongoing support costs compound over time. When training failures occur, custom-built systems require determining whether issues stem from hardware configuration, driver versions, BIOS settings, or software bugs. ABS AI Workstations ship with validated configurations and technical support from engineers who understand AI workloads, accelerating problem resolution and reducing downtime.

Warranty and Support Value

Component warranties require managing multiple vendors for GPU, motherboard, CPU, and storage, each with different RMA processes and support quality. When a GPU fails during a critical project deadline, obtaining replacement hardware through consumer RMA channels introduces delays of days to weeks. Pre-built systems offer coordinated warranty support with single points of contact and often expedited replacement for mission-critical components.

Support value increases for organizations without dedicated IT infrastructure teams. Small research labs, startups, and individual professionals benefit from expert assistance when upgrading firmware, optimizing training performance, or diagnosing unexpected behavior. The peace of mind knowing that configuration issues have knowledgeable support resources reduces risk and allows teams to focus on their core AI development work rather than hardware troubleshooting.

2026 Market Trends Favoring Local Compute

Several industry trends in 2026 strengthen the economic case for workstation ownership over cloud rental, suggesting that the TCO advantage of local compute will increase rather than diminish in coming years.

Cloud Price Increases

Major cloud providers raised GPU instance pricing by 15%–25% in 2025–2026 as demand for AI compute exceeded supply. This trend continues as training larger models becomes industry standard. Meanwhile, workstation GPU prices have stabilized as production volume increased, creating wider economic separation between ownership and rental. Organizations locked into cloud contracts face recurring price increases, while workstation owners insulate themselves from market fluctuations.

Model Efficiency Improvements

Research advances in model architecture efficiency, quantization techniques, and mixed-precision training enable larger models to train on fewer GPUs with less memory. Models that required eight cloud GPUs in 2024 now train effectively on dual or quad workstation configurations. As efficiency continues improving, the threshold where local compute becomes economical drops from large-scale training toward mid-size model development.

Data Sovereignty Regulations

Increasing data privacy regulations in healthcare, finance, and European markets create compliance complexities for cloud processing of sensitive data. Organizations subject to GDPR, HIPAA, or industry-specific data localization requirements find local workstations simplify compliance by keeping data within controlled environments. As regulatory requirements tighten, the non-financial advantages of local compute strengthen the overall value proposition.

Sustainable Computing Initiatives

Organizations measuring carbon footprint and sustainability metrics increasingly question the efficiency of cloud data centers powered by grids with varying renewable energy percentages. Local workstations allow organizations to control their compute’s power source, integrate with on-site solar installations, and make transparent sustainability decisions. While not primarily economic, sustainability considerations influence procurement decisions for organizations with environmental commitments.

Making the Investment Decision

The economic case for workstation ownership becomes compelling when several conditions align: regular GPU usage (4+ hours daily), multi-month project timelines, data sovereignty requirements, or team sizes where shared resources achieve high utilization. Organizations matching these criteria should calculate their specific breakeven timeline by comparing current cloud spending to workstation configurations supporting their workloads.

For individual developers, the calculation balances learning investment against future career value. An aspiring ML engineer spending $300 monthly on cloud resources for learning would break even on a workstation purchase of about $9,000 within approximately 30 months, a timeframe aligned with career development timelines. The ability to experiment without limits or per-hour anxiety accelerates learning and portfolio development.

Teams should evaluate workstation provisioning ratios based on workflow patterns. One workstation per two engineers achieves excellent utilization when team members work complementary schedules or alternate between training-intensive and inference-focused tasks. Organizations can start with a single shared workstation and expand as utilization metrics justify additional capacity.

ABS AI Workstations provide configurations across the full spectrum from individual developer to enterprise research lab, enabling organizations to match investment precisely to requirements. The one-time nature of hardware investment transforms ongoing cloud expenses into capital assets that generate value across years of AI development work, fundamentally changing the economics of professional AI compute.