NVIDIA/HP Tesla V100 PG500-216 32GB HBM2 PCIe 3.0 x16 Data Center AI Deep Learning HPC Accelerator GPU

- Type: GPU Computational Accelerator

- Nvidia Part Number: 699-2G500-0216-400

- HPE Part Number: P44861-001/P44899-001

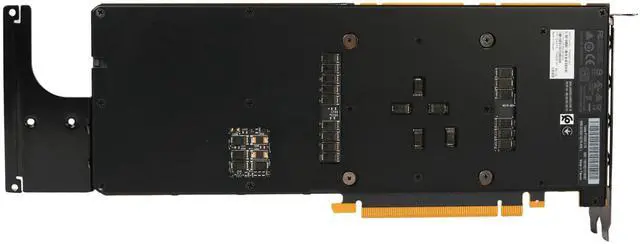

- Form Factor: Dual-Slot PCIe Full Height/Length

Key Features

- GPU Memory: 32GB HBM2 (High Bandwidth Memory 2) with ECC (Error-Correcting Code) for reliable performance in AI, HPC, and data-intensive workloads.

- CUDA Cores: 5,120 CUDA cores, delivering high parallel compute performance for deep learning, scientific computing, and simulation tasks.

- Tensor Cores: 640 first-generation Tensor Cores, accelerating AI training and inference workloads with mixed-precision computing.

- Performance: Up to ~14 TFLOPS FP32 and ~125 TFLOPS Tensor performance for AI and HPC workloads.

- Interface: PCIe 3.0 x16 for high-bandwidth server and cluster connectivity.

- Display Outputs: None; compute-only GPU with no display support.

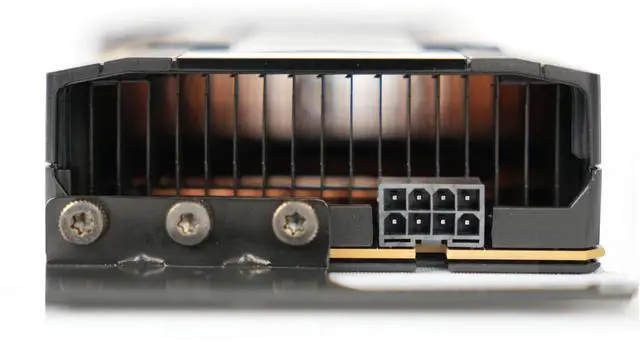

- Form Factor: Dual-slot, full-height, full-length passive cooling design for server airflow systems.

- Power Consumption: ~250W TDP via PCIe slot and auxiliary power connector

- Enterprise Features: Supports CUDA, cuDNN, and TensorRT for AI, machine learning, and HPC workloads.

Ideal Applications

- AI Model Training (Deep Learning / LLMs) -- Accelerates large-scale neural network training using Tensor Core compute for mixed-precision workloads

- High-Performance Computing (HPC) -- Used in scientific simulation, physics modeling, climate research, and engineering computations requiring massive parallel processing

- Data Analytics & Big Data Processing -- Ideal for large dataset analysis, machine learning pipelines, and GPU-accelerated database workloads

- AI Inference & Research Clusters -- Supports deployment of trained AI models in research environments and multi-GPU inference systems

- Enterprise GPU Compute Nodes -- Designed for data center environments running 24/7 workloads, including cloud computing, virtualization, and AI server clusters